Topic modeling vs. cluster analysis: What’s the difference?! June 13, 2017 - Blogs on Text Analytics

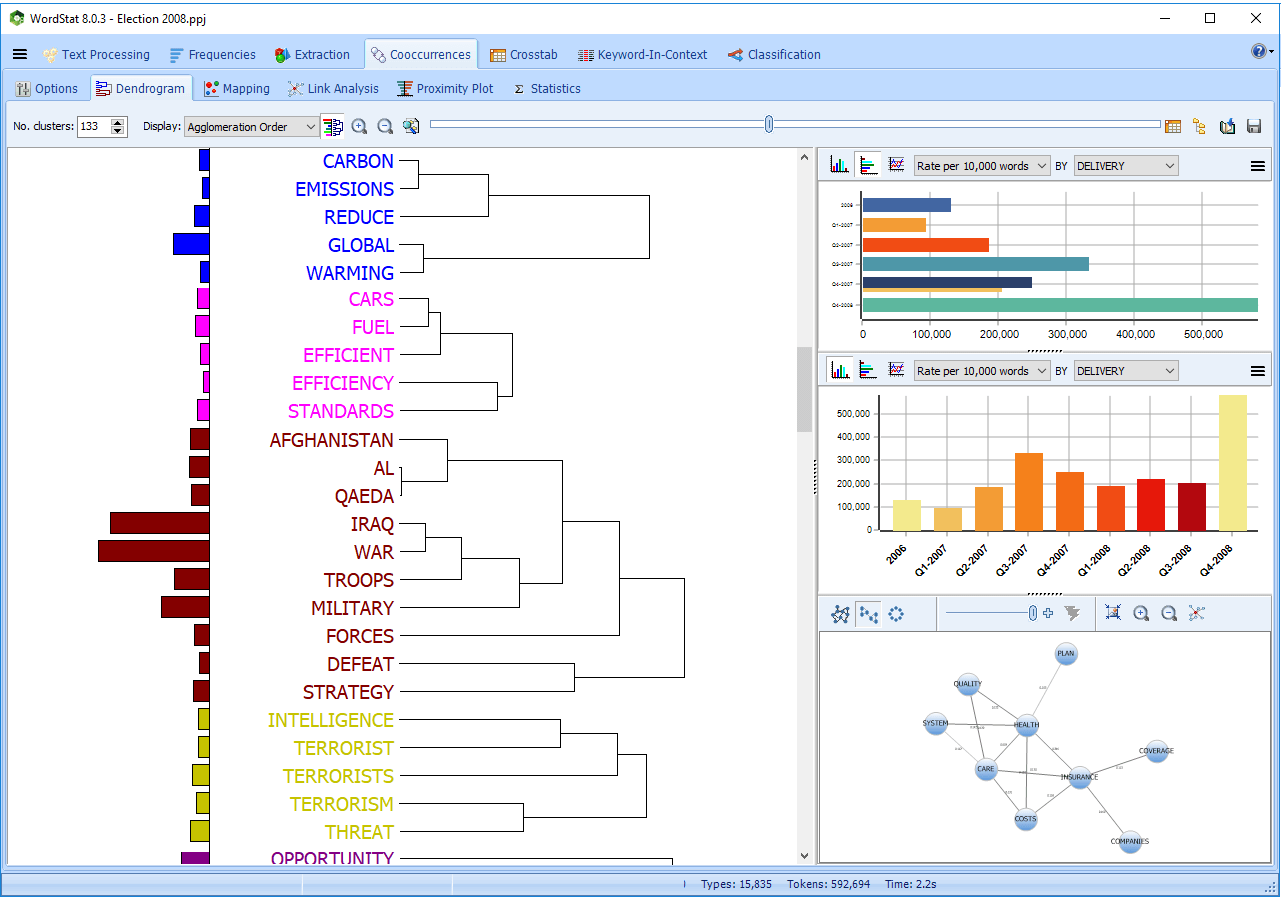

Alright, so you have a huge pile of documents and you want to find the mysterious patterns you believe are hidden within! A challenging task, but you are in luck because you have WordStat in your arsenal. That means you have at least two options for finding patterns and groupings in your data: 1) topic modeling, or 2) cluster analysis. But which one should you use? In this post, we will help you choose by highlighting some of the differences between the topic modeling and clustering approaches.

As we explained in our previous post about topic modeling, a topic can be defined as a set of keywords, each with a probability of occurrence in that topic. Different topics have their own sets of keywords with corresponding probabilities. Topics may share some keywords, but most likely with different probabilities. Easy, right?! Oh, I forgot to mention that a document in your corpus can be associated with more than one topic. There is a wide range of approaches to choose from for discovering hidden topics, but in general, topic modeling uncovers the topics by calculating conditional probabilities of the topics given the words in the documents. No matter which approach you select, you will end up with a list of topics, each containing a set of associated keywords.
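To make the idea of "topics as keyword probabilities" a bit more concrete, here is a toy Python sketch. It is not how WordStat or any particular topic modeling algorithm works: the two topics, their keyword probabilities, the uniform prior, and the helper name `topic_mixture` are all made up for illustration. It applies Bayes' rule to get P(topic | word) for each word and averages those values over a document, which shows how one document can be associated with more than one topic.

```python
from collections import Counter

# Hypothetical topics: each maps keywords to P(word | topic).
# Note that "team", "game" and "market" are shared, with different probabilities.
TOPICS = {
    "sports": {"game": 0.4, "team": 0.4, "market": 0.1, "score": 0.1},
    "finance": {"market": 0.5, "stock": 0.3, "team": 0.1, "game": 0.1},
}


def topic_mixture(words, topics=TOPICS):
    """Average the per-word posteriors P(topic | word) over a document."""
    prior = 1.0 / len(topics)  # assumption: every topic is equally likely a priori
    totals = Counter()
    counted = 0
    for word in words:
        # Bayes' rule numerator: P(topic) * P(word | topic)
        joint = {t: prior * probs.get(word, 0.0) for t, probs in topics.items()}
        norm = sum(joint.values())
        if norm == 0:
            continue  # the word is not a keyword of any topic
        for t, p in joint.items():
            totals[t] += p / norm
        counted += 1
    if counted == 0:
        return {t: 0.0 for t in topics}
    return {t: totals[t] / counted for t in topics}


doc = "team game market stock".split()
mixture = topic_mixture(doc)
print(mixture)
```

The printed dictionary gives the document a non-zero weight on both topics, rather than placing it in a single group.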

Things are slightly different in clustering! Here, the algorithm groups documents based on a similarity measure. One way to do this is to transform each document into a numeric vector containing the weights assigned to the words in that document. I know you are thinking about tf-idf, and yes, that is one way to do it. The clustering technique then applies the similarity measure to the numeric vectors to group the documents. Basically, each document will show up in exactly one cluster[1]. The final output is a list of clusters along with their members.
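The pipeline described above (documents to tf-idf vectors, then a similarity measure, then groups) can be sketched in a few lines of plain Python. This is only an illustration under some assumptions: the four sample documents, the whitespace tokenizer, the 0.1 similarity threshold, and the function names are invented here, and the grouping step is a simple greedy "leader" clustering chosen because it is short and deterministic, not because it is what WordStat uses.

```python
import math
from collections import Counter


def tfidf_vectors(docs):
    """Turn each document into a sparse {word: tf-idf weight} vector."""
    tokenized = [doc.lower().split() for doc in docs]
    n_docs = len(tokenized)
    # Document frequency: in how many documents does each word appear?
    df = Counter(word for tokens in tokenized for word in set(tokens))
    vectors = []
    for tokens in tokenized:
        tf = Counter(tokens)
        vectors.append(
            {w: (c / len(tokens)) * math.log(n_docs / df[w]) for w, c in tf.items()}
        )
    return vectors


def cosine(a, b):
    """Cosine similarity between two sparse vectors."""
    dot = sum(weight * b.get(word, 0.0) for word, weight in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def leader_clustering(vectors, threshold=0.1):
    """Hard clustering: each document joins the first cluster whose leader
    is similar enough, otherwise it starts a new cluster."""
    leaders = []  # index of the first document of each cluster
    labels = []   # exactly one cluster id per document
    for i, vec in enumerate(vectors):
        for cluster_id, leader in enumerate(leaders):
            if cosine(vec, vectors[leader]) >= threshold:
                labels.append(cluster_id)
                break
        else:
            leaders.append(i)
            labels.append(len(leaders) - 1)
    return labels


docs = [
    "the team won the game",
    "the team lost the game",
    "the stock market fell",
    "the stock market rose",
]
labels = leader_clustering(tfidf_vectors(docs))
print(labels)
```

Each document receives a single cluster id, so the output is a partition of the documents, not a list of keywords.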

Okay, what’s the difference then? Well, in topic modeling you extract topics from the documents, so you can think of it as a transformation into a much smaller data space, the topic space, since the number of extracted topics is much smaller than both the number of documents and the size of the vocabulary. Each document can then be described by the topics it is associated with. In (hard) clustering, by contrast, the final output is a set of clusters, each containing a set of documents, with every document assigned to a single cluster.

[1] There are fuzzy (soft) clustering techniques where a data point can belong to more than one cluster, but here we discuss the basic idea and focus only on hard clustering.